Key takeaways

- HOP is a leadership lens for interpreting work, error, and pressure in real operations.

- Why blame persists: urgency, scrutiny, and incomplete information push leaders toward simple explanations and brittle decisions.

- Learning beyond compliance: learn from normal work before harm occurs.

- Real-world examples: shipyards across Brazil, Singapore, China, Malaysia.

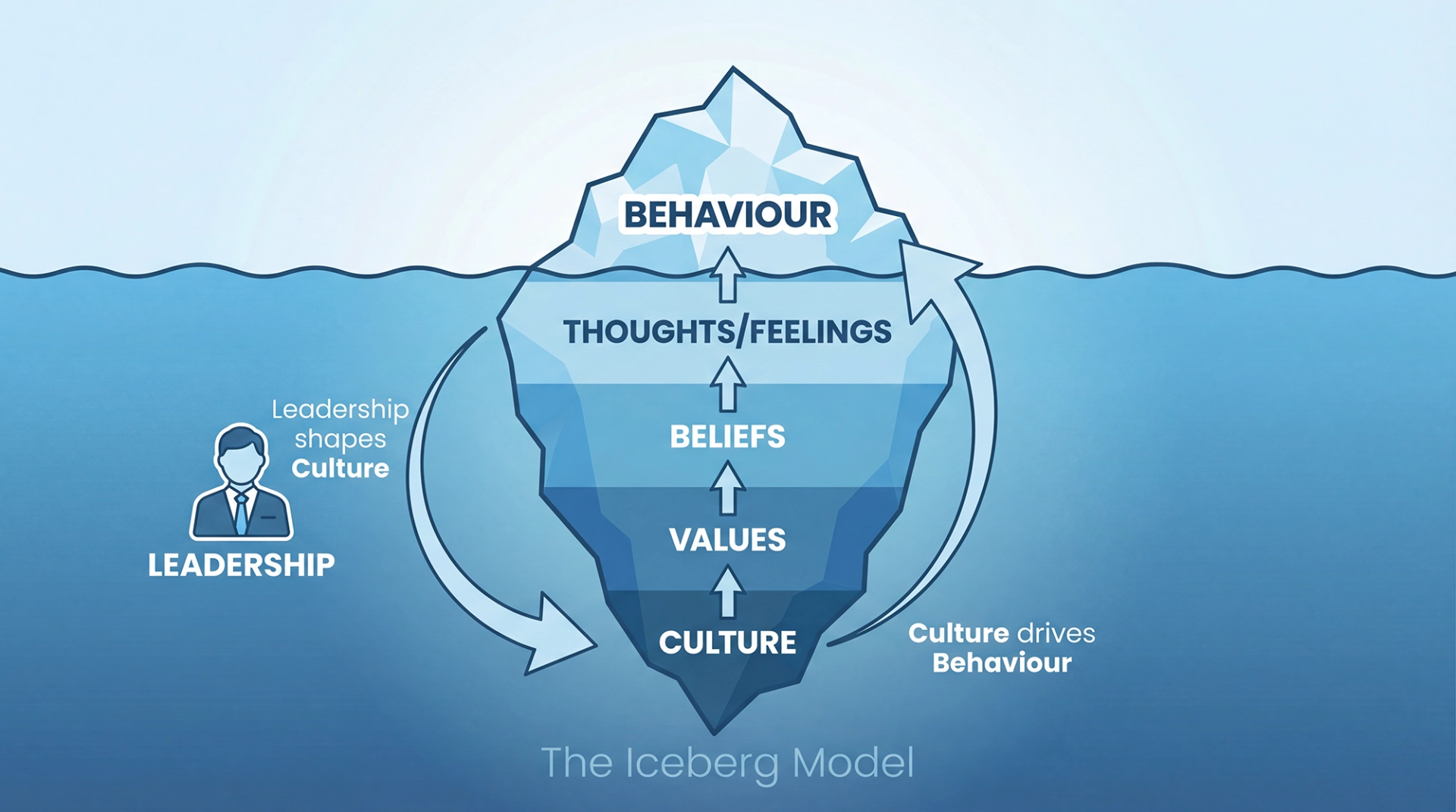

- Culture chain: culture → values → beliefs → thoughts/feelings → behaviour.

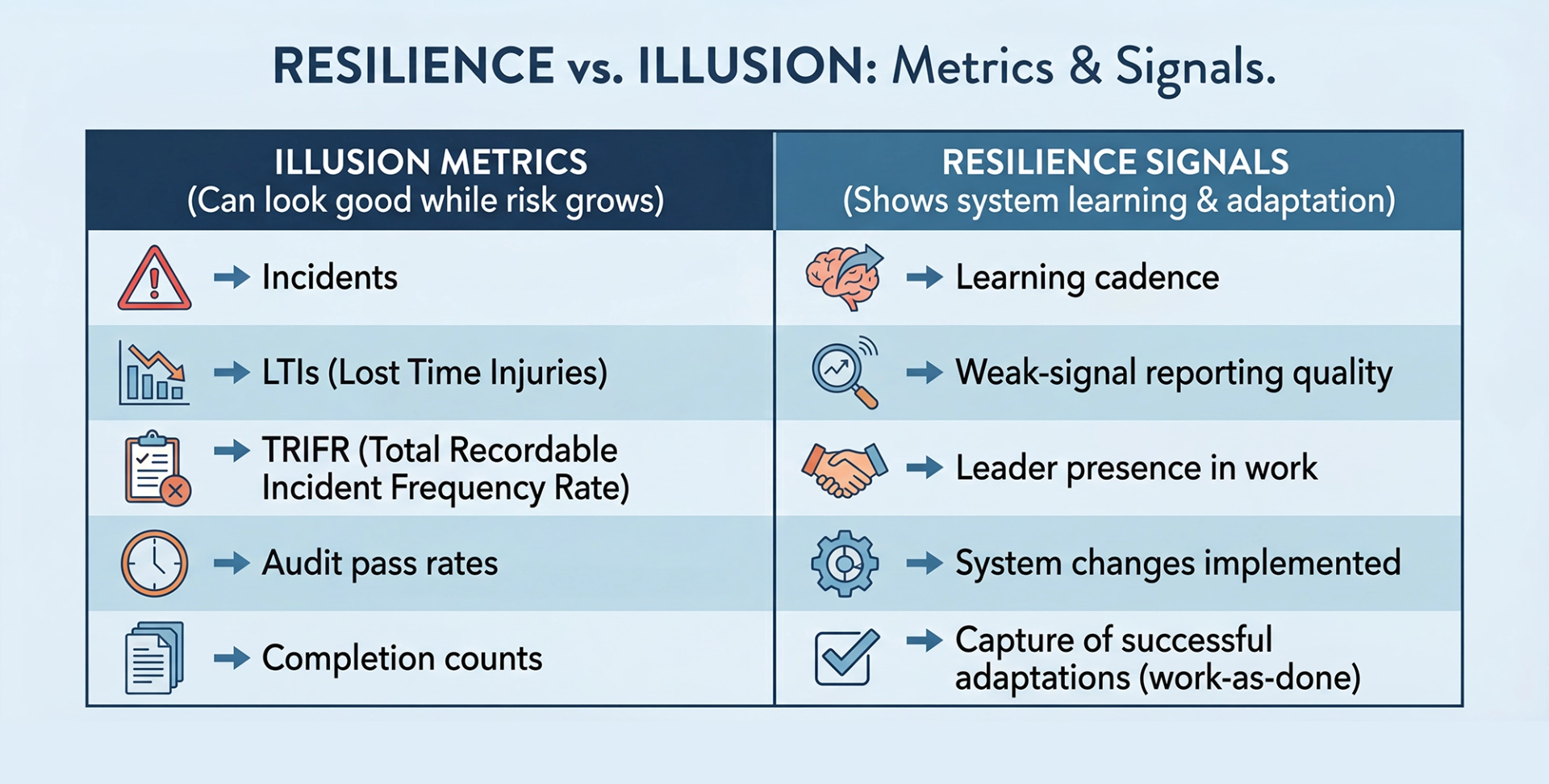

- Resilience over illusion: good metrics can hide fragile systems.

What Human and Organisational Performance Really Means in Practice

Human and organisational performance (HOP) is often described as a safety philosophy. In practice, it is something more useful than that. It is a decision-making lens that helps leaders interpret work, error, and risk in complex systems so they can make better choices before harm occurs. [4][5]

In my work as a human and organisational performance consultant, I see the same pattern repeatedly: organisations invest heavily in rules, procedures, and metrics, yet still struggle to understand why incidents occur, why warning signs are missed, and why well-intentioned people make poor decisions that look obvious in hindsight. [4][5]

HOP starts from a different set of assumptions:

- Error is normal

- Blame fixes nothing

- Learning and improvement are vital

- Context drives behaviour

- Leadership shapes culture

- Capacity and resilience matter as much as controls

These are not slogans. They are observable truths about how work actually happens in high-risk environments. [4][5]

“The absence of incidents is not evidence of safety. It is simply evidence that nothing has gone wrong yet.”

Human and organisational performance challenges leaders to rethink how systems, pressure, and context shape decisions long before harm occurs.

This article explores what those principles look like outside the classroom, in shipyards, offshore projects, and leadership teams operating under real commercial and operational pressure.

Why Blame Persists in High-Risk Industries

Blame persists because, under pressure, it feels efficient.

When something goes wrong, leaders are often operating with:

- Compressed timeframes

- Commercial and schedule pressure

- Regulatory scrutiny

- Incomplete or conflicting information

In those conditions, organisations default to simple explanations: Who made the mistake? Why didn’t they follow the procedure? Why didn’t someone speak up?

The problem is that blame creates brittle systems.

Brittle systems often look strong because they show:

- Low incident counts

- Long LTI-free streaks

- Extensive procedures and controls

Brittle systems rely on perfect human performance. When something unexpected happens, they fail hard.

A critical misconception sits at the centre of this thinking:

The absence of incidents is not evidence of safety. It is simply evidence that nothing has gone wrong yet.

From a safety culture consultant’s perspective, blame doesn’t improve performance; it narrows learning. It stops organisations from seeing how pressure, context, and system design shape everyday decisions. [4][5]

HOP as a System Lens, Not a Safety Program

Human and organisational performance is not a program to roll out or a checklist to complete. It is a system lens that changes how leaders interpret information. [4][5]

The five principles of HOP describe how complex systems actually behave:

Error Is Normal

Human error is not a defect to eliminate. It is a predictable feature of human work in complex environments. [4][5]

Blame Fixes Nothing

Blame may feel satisfying, but it adds no new understanding of how the system allowed failure to occur. [4][5]

Learning and Improvement Are Vital

Sustainable performance comes from learning before harm, not just after incidents. [4][5]

Context Drives Behaviour

People do not act randomly. Decisions make sense within the context in which they are made. [4][5]

Leadership Shapes Culture

What leaders say, tolerate, reward, and ignore becomes the culture of the organisation. How leaders respond to failure matters. [4][5]

Underpinning all of this is a sixth, often overlooked truth: capacity and resilience must be deliberately built. Controls alone do not create safety. [4][5]

Learning and Improvement Beyond Compliance

Many organisations equate learning with compliance activities:

- Audits

- Investigations

- Corrective actions

- Updated procedures

These have value, but they are lagging mechanisms. They often rely on something going wrong first. [5]

HOP shifts attention toward learning from normal work:

- How people adapt successfully

- How trade-offs are made under pressure

- How systems support or constrain good decisions

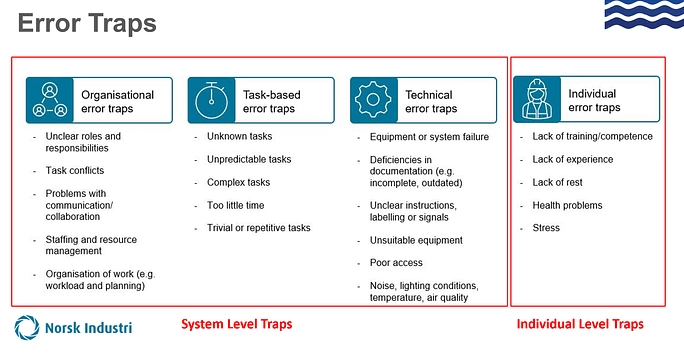

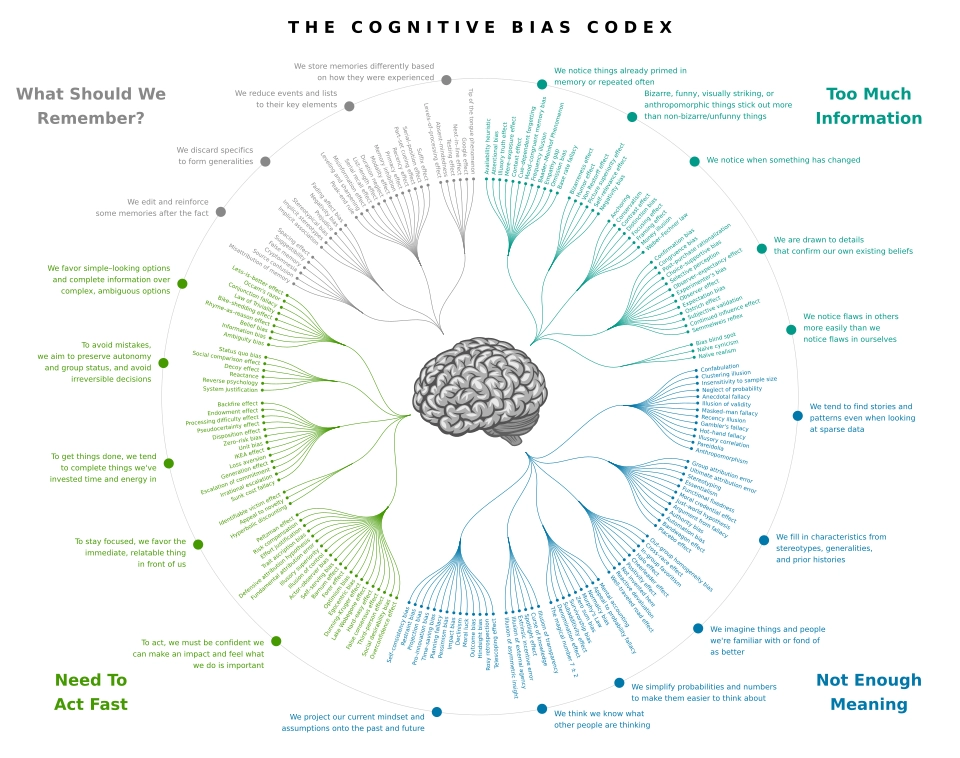

This matters because risk increases when:

- There is urgency

- Information is incomplete

- Information overload sets in

- Cognitive shortcuts take over

Reference: Cognitive Bias Codex (Wikimedia Commons)

When leaders and teams are fixated on one target (a deadline, a metric, a single risk narrative), they can miss what changes in front of them. That is the key insight from research on inattentional blindness: attention is a limited resource, and what you focus on determines what you miss. [1]

Under these conditions, even highly experienced people are vulnerable to bias and pattern-matching. Proactive learning cycles help organisations surface weak signals before they escalate. [5][6]

When Work Succeeds by Deviating (and the System Never Learns)

One of the most important (and most missed) learning opportunities is this: work is often done differently from how it was anticipated. Workers adapt to what the system didn’t account for, and those adaptations are frequently what prevents failure. [4][5]

The risk builds when the organisation never captures those “successful deviations” and feeds them back into how work is designed, resourced, and planned. Over time, the system assumes the written method is workable, while success actually depends on invisible trade-offs made in real operations. [5]

- Work-as-envisaged: how the process is expected to run (procedures, plans, assumptions)

- Work-as-done: what people actually do to succeed (adjustments, workarounds, local expertise)

- HOP move: treat adaptations as data, then improve the system so that success depends less on workers making judgment calls about when to deviate from work-as-envisaged

Learning in the Real World: Collaboration Beyond Competition

Some of the clearest examples of HOP in practice come from environments where organisations choose learning over reputation management.

While working across offshore and shipyard environments in Brazil (Angra dos Reis / BrasFELS), Singapore, China (Dalian, Qidong and Tianjin), and Malaysia, I observed something that still stands out. [4]

Companies that compete fiercely for major oil and gas contracts, including MODEC, Seatrium, BOMESC, and COSCO SHIPPING, made a conscious decision to collaborate on safety. [4]

There was:

- No regulatory requirement forcing participation

- No commercial obligation to share information

- No expectation of immediate return

Yet leaders, managers, and supervisors stopped work and made time to openly discuss:

- Mishaps and near misses

- System weaknesses

- Innovations being trialled

- Equipment and controls that had failed or succeeded

What mattered was not who caused an issue, but what the system revealed.

“Watching competitors voluntarily share failures across global shipyards was one of the clearest demonstrations of Human and Organisational Performance in action. Learning only works when blame is set aside.”

This is HOP in action:

- Error is treated as information

- Blame is deliberately set aside

- Learning is prioritised over ego

- Safety is viewed as a shared industry responsibility

When Organisations Decide to Become Learning Organisations

Learning organisations do not emerge by accident. They are created by deliberate leadership choices.

In practice, this looks like:

- Leaders being present, not distant

- Time invested in reflection, not just output

- Learning embedded into daily operations, not bolted on after incidents

In several environments, I watched leaders actively create psychological safety by:

- Inviting uncomfortable questions

- Slowing conversations when weak signals emerged

- Acknowledging uncertainty rather than forcing certainty

These behaviours increase organisational capacity and resilience. They allow teams to surface risk early, especially when bias and pressure would otherwise suppress it. [4][5]

Participant reflection: leadership decisions and HOP thinking

A public reflection on how HOP changes the way leaders interpret error, context, and risk in real operations.

Participant view: from “who failed?” to “what shaped the decision?”

A public post describing the practical impact of HOP: improving systems by understanding how real work happens under pressure.

Leadership Is the System

Culture does not live in posters or policies. It lives in everyday leadership behaviour.

The iceberg model is useful here:

- Behaviour sits above the waterline: it is what you can see

- Beneath it sits the chain that produces it: culture → values → beliefs → thoughts/feelings → behaviour

- Leadership behaviour shapes all of it

This is why the question that matters most is not “Are we safe?” but:

“Are we good, or are we just lucky?”

Luck can produce long runs without incidents. Only systems designed for human variability produce resilience. Leadership determines which one an organisation is relying on. [4][5]

Safety Performance Without Illusions

Metrics matter, but they can also mislead.

History provides sobering examples. In the Space Shuttle Columbia disaster, schedule and budget pressure, the normalisation of deviance, and a failure to translate prior lessons from Challenger into daily decision-making contributed to a system that looked stable until it wasn’t. [3][2]

Engineers raised concerns about foam strike risk, but the system struggled to imagine worst-case outcomes, underestimated the possibility of critical damage, and operated inside communication silos and leadership hierarchies that narrowed information flow and authority. [3]

In hindsight the pattern is clear: low psychological safety made it harder for dissenting voices to persist at critical moments, especially when speaking up felt like it could jeopardise credibility or career security. [2]

The lesson is uncomfortable but clear:

- Strong performance metrics can coexist with fragile systems

- Resilience must be designed long before something goes wrong

From a HOP perspective:

- Context explains behaviour

- Variability must be expected

- Capacity and resilience must be built deliberately

What High-Performing Organisations Do Differently

Across high-risk industries, organisations that sustain performance over time share common patterns:

- They share uncomfortable data: Learning and improvement are prioritised over image management.

- They design systems around human variability: Error is expected and accounted for, not punished.

- They learn from normal work, not just failure: Successful adaptations are studied, not ignored.

- They treat safety as leadership work: Leadership behaviour sets the context for every decision.

- They invest before harm occurs: Capacity and resilience are built proactively.

- They check for cognitive bias in critical decisions: Especially under urgency or information overload. [6]

Getting Better, Together

“The absence of incidents is not evidence of safety. It is simply evidence that nothing has gone wrong yet.”

In high-risk environments, safety is not a technical problem to be solved. It is a leadership choice made every day.

Human and organisational performance offers a way to move beyond blame and toward understanding. It asks leaders to accept three uncomfortable truths that apply in every high-risk system [4][5]:

- The decisions you make always impact others

- Cognitive bias hides risk

- You are always choosing

Organisations that embrace these truths do not eliminate risk, but they become far better at managing it. That’s getting better, together.

Sources & Notes

- Checker Shadow Illusion (Edward H. Adelson, MIT).

- “Gorillas in our midst” (Simons & Chabris, 1999).

- NASA Significant Incidents (Space Shuttle Columbia: overview & resources).

- Columbia Accident Investigation Board (CAIB) Report, Volume I (PDF).

- The 5 Principles of Human Performance (Todd Conklin), book listing.

- U.S. Department of Energy: Human Performance Handbook (DOE-HDBK-1028-2009), Volume 1.

- Cognitive Bias Codex (Wikimedia Commons, SVG).

- LinkedIn public posts referenced in the article:

  • https://www.linkedin.com/posts/activity-7415260099419484160-3Y97
  • https://www.linkedin.com/posts/alvesmilena_foi-uma-honra-ser-convidada-para-participar-activity-7343028660813115392-KPuZ
  • https://www.linkedin.com/posts/omardeandrade_leadership-humanandorganizationalperformance-activity-7362856709599477760-XdEu

- Error traps visual (site asset).

- Iceberg leadership model visual (site asset).